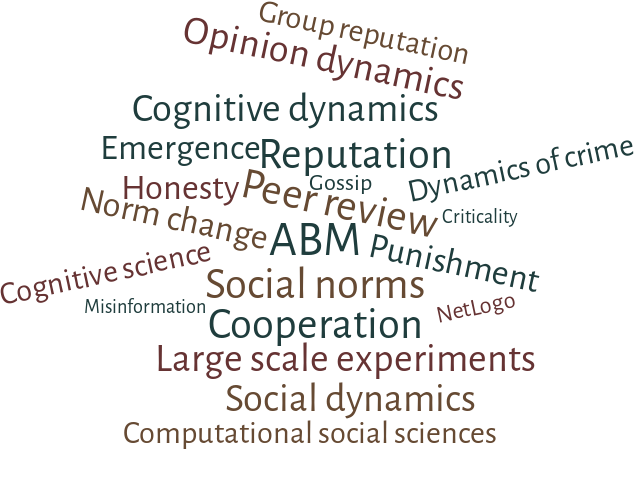

We are a highly interdisciplinary research group working at the intersection of the cognitive, social, and computational sciences. LABSS is based at the Institute of Cognitive Sciences and Technologies (ISTC) of the National Research Council of Italy (CNR) and aims to foster an exploratory approach to Agent-Based Modeling and Simulation.

In today’s increasingly digitalized society, interactions between humans and artificial agents are becoming ever more frequent and complex. These interactions have the potential to significantly enhance human experiences, offering unprecedented opportunities for innovation and efficiency. However, they can also lead to frustration and pose severe threats to human dignity and autonomy. As Artificial Intelligence (AI) agents take on roles as advisors, delegates, and cooperative partners, we face a critical need to understand how these roles are accepted within our emerging hybrid societies.


LABSS and the Università degli Studi di Napoli "L'Orientale" organized a course on the Agent-Based Modeling methodology, "Simulate the past: agent models for the study of ancient societies". The course provides the theoretical and practical foundations for applying agent-based models to the study of ancient societies, starting from archaeological data.

Changes in social norms during the early stages of the COVID-19 pandemic across 43 countries


In recent months, LABSS researchers have won several national and international research grants. Some of our new projects address fostering open science (FOSSR), future AI research (FAIR), reducing, reusing, and rethinking packaging (R3PACK), the dynamics of social norms under collective risk (DYNOSOR), the behavioural immune system and social conformity (BISSCo), cooperation and brain synchrony (COBRA), and security and rights in cyberspace (SERICs).

FOSSR and FAIR are funded by the Italian Ministry of University and Research as part of the National Recovery and Resilience Plan (PNRR), R3PACK is funded through a European Horizon grant, and DYNOSOR, BISSCo, COBRA, and SERICs are funded through the Italian PRIN grant scheme. Click here for more information about these projects.

In the coming weeks and months, we will open several calls for postdoctoral positions related to these projects. Keep an eye out for updates, or contact the LABSS researchers working on these projects for more information.

COBRA Project

COoperation and BRAin-Synchrony: a multiscale and translational approach. Funded under the PRIN scheme (Progetti di Ricerca di Rilevante Interesse Nazionale, "Research Projects of Relevant National Interest"), 2022-2024.


FAIR Project

Extended Partnership on Artificial Intelligence “Future AI Research” (FAIR), funded by the Ministry of University and Research as part of the National Recovery and Resilience Plan (PNRR) (2023-2026).


Anxo Sanchez. In this talk, I will present the results of a study of relationship networks that we have been conducting in high schools since 2018. Our rich dataset allows us to answer both structural and dynamical (longitudinal) questions.


Sergey Gavrilets. Understanding and predicting human cooperative behavior and belief dynamics remain a major challenge from both scientific and practical perspectives. Because of the complexity and multiplicity of the material, social, and cognitive factors involved, both empirical and theoretical work tend to focus on only a few pieces of the puzzle.


From 2 to 6 October, Sergey Gavrilets and Anxo Sanchez visited LABSS.


Enabling imitation‑based cooperation in dynamic social networks.
